
What AI means to me and Aiko

What is AI (Artificial Intelligence)? Many people think that AI is the ability of a mechanical object, such as a robot, to converse with a human being. I think this definition is incomplete. The meaning of AI should include the capacity for the robot to have senses similar to a human's. AI should include speech, hearing, conversation, vision, and object recognition, along with interaction with objects and surroundings, such as a sense of danger or caution in unfamiliar areas.

Currently, Aiko can hold simple conversations with a human being. By simple, I mean that she can converse at a level similar to that of a 5-year-old.

Aiko already has facial recognition capabilities and can distinguish different human expressions.

Aiko can identify human body movements and trace motion to detect what we are doing.

Aiko can sense danger and be mindful of her surroundings (for example, people hurting her).

Aiko can scan her surroundings and recognize which objects are most important, such as medications, and can identify the type of medication.

For instance, if you put a chair in front of Aiko, her AI can recognize that the chair is in the foreground and decide to disregard any information from the background.

If a person happens to stand beside the chair, Aiko’s AI will change focus to make the person the point of interest. The chair will be treated like the background and ignored.

If two people stand beside the chair, Aiko will be aware of the second person but remain focused on the first person. If the second person engages in a conversation with Aiko, the AI is intuitive enough to focus primarily on the person who is speaking.
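The focus rules in the chair example can be written down as a small priority function. This is only an illustrative sketch, not the actual BRAINS code: the `Detection` class, its field names, and the `choose_focus` function are all hypothetical, and the tie-break for a scene with several objects and no people (earliest arrival wins) is an assumption.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Detection:
    """One thing seen in the scene (all field names are hypothetical)."""
    label: str                 # e.g. "chair" or "person A"
    is_person: bool            # True for people, False for objects
    arrival_order: int         # lower value = appeared in the scene earlier
    is_speaking: bool = False  # True if this person is talking to the robot


def choose_focus(detections: List[Detection]) -> Optional[Detection]:
    """Pick the point of interest; everything else is treated as background."""
    if not detections:
        return None
    people = [d for d in detections if d.is_person]
    if people:
        speakers = [p for p in people if p.is_speaking]
        # A person who is speaking outranks everyone else;
        # otherwise stay with whoever arrived first.
        candidates = speakers or people
        return min(candidates, key=lambda d: d.arrival_order)
    # No people present: fall back to the earliest object (an assumption).
    return min(detections, key=lambda d: d.arrival_order)


chair = Detection("chair", is_person=False, arrival_order=0)
first = Detection("person A", is_person=True, arrival_order=1)
second = Detection("person B", is_person=True, arrival_order=2, is_speaking=True)
focus = choose_focus([chair, first, second])
print(focus.label)  # the speaking person wins: "person B"
```

Keeping the rules in one function makes the priority order (speaker, then first person, then object) easy to read and to change.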

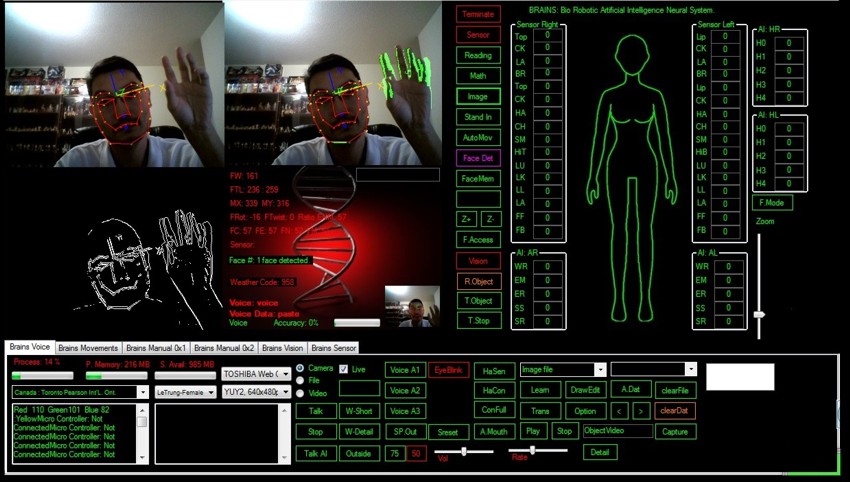

As you can see from the latest BRAINS software upgrade, there are 3 vision systems.

The main vision system (top left) recognizes the person’s face and facial expressions. It also identifies 3D objects. The system tracing human motion (top right) highlights any movement in light green. There is a light green area around my right hand as I wave it, and along the right side of my body, which moves with my hand. The third system (bottom left) detects the point of interest. Notice that the AI ignores the chair and anything else in the background. The AI focuses on the most important “object” in the room, in this case me, as I am moving my hand.
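How BRAINS actually detects motion is not described here. One common way to get a highlighted motion area like the light green region is frame differencing: compare two consecutive frames and mark the pixels that changed. The sketch below assumes grayscale frames stored as plain lists of pixel rows; the `motion_mask` function and its `threshold` value are hypothetical and chosen only for illustration.

```python
from typing import List

Frame = List[List[int]]  # grayscale image as rows of 0-255 intensities


def motion_mask(previous: Frame, current: Frame, threshold: int = 25) -> List[List[bool]]:
    """Mark each pixel whose brightness changed by more than `threshold`."""
    return [
        [abs(cur - prev) > threshold for prev, cur in zip(prev_row, cur_row)]
        for prev_row, cur_row in zip(previous, current)
    ]


# Two tiny 2x3 frames: only the middle pixel of the top row changes.
before = [[10, 10, 10], [10, 10, 10]]
after = [[10, 200, 10], [10, 10, 10]]
mask = motion_mask(before, after)
print(mask)  # True marks the pixels that would be drawn in light green
```

The pixels marked `True` are the ones a display would paint in the highlight color, while unchanged background pixels stay as they are.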

As of today, the BRAINS software is being updated slowly. This is simply a hardware limitation, as the CPU load is currently maxed out at 99%. The only way that I can continue development of Aiko’s BRAINS software is to upgrade to a quad-core or hex-core CPU, which is currently beyond my budget.

So unfortunately, until I can secure proper funding, I will be unable to advance and expand the BRAINS software in the near future.

My point is that a true AI should contain all human traits, not just conversation. The BRAINS software is not even close to human AI, but it's the start of something wonderful.

The current BRAINS software is nowhere close to the human AI that I envision, but I am determined to someday reach that goal.

BRAINS version 1.5

Regards,

Le Trung

If you have any questions about Project Aiko, please do not hesitate to email us.

info@projectaiko.com
