
What Does AI Mean to Me and to Aiko?

What is AI (Artificial Intelligence)? Many people think that AI is the ability of a mechanical object, such as a robot, to converse with a human being. I think this definition is incomplete. The meaning of AI should also include a robot's capacity to have senses and abilities similar to a human's: speech, hearing, conversation, vision, and object recognition, along with the ability to interact with objects and surroundings, such as sensing danger or being cautious in unfamiliar areas.

Currently, Aiko can have simple conversations with a human being. By simple, I mean she can converse at about the level of a 5-year-old.

Aiko already has facial recognition capabilities and can distinguish different human expressions.

Aiko can identify human body movements and track motion to detect what a person is doing.

Aiko can sense danger and be mindful of her surroundings (for example, people trying to hurt her).

Aiko can scan her surroundings and recognize which objects are most important, such as medications, and identify the type of medication.

For instance, if you put a chair in front of Aiko, her AI can recognize that the chair is in the foreground and disregard any information from the background.

If a person happens to stand beside the chair, Aiko’s AI will shift its focus to make the person the point of interest. The chair will then be treated as background and ignored.

If two people stand beside the chair, Aiko will be aware of the second person but remain focused on the first. If the second person starts a conversation with Aiko, the AI is intuitive enough to shift its focus primarily to the person who is speaking.
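The focus rules above can be sketched as a small priority function. This is a minimal illustration under stated assumptions, not the BRAINS implementation: the `Detection` type and the `point_of_interest` function are hypothetical, recognition is assumed to happen upstream, and "the first person" is assumed to mean the person who was seen first.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass
class Detection:
    """Something the vision system found in the current frame (hypothetical structure)."""
    label: str            # e.g. "person" or "chair"
    first_seen: int       # frame index at which this detection first appeared
    in_foreground: bool   # False for anything classified as background
    is_speaking: bool = False


def point_of_interest(detections: Sequence[Detection]) -> Optional[Detection]:
    """Return the one detection to focus on, or None if nothing is in the foreground."""
    # Background information is disregarded entirely.
    candidates = [d for d in detections if d.in_foreground]
    if not candidates:
        return None

    people = [d for d in candidates if d.label == "person"]
    if people:
        # Someone who is speaking outranks everyone else in view.
        speakers = [p for p in people if p.is_speaking]
        if speakers:
            return min(speakers, key=lambda p: p.first_seen)
        # Otherwise keep focus on the person who appeared first; later arrivals
        # stay in `detections` (Aiko is aware of them) but are not chosen.
        return min(people, key=lambda p: p.first_seen)

    # With no people present, the earliest foreground object (e.g. the chair) wins.
    return min(candidates, key=lambda d: d.first_seen)
```

With only a chair in view, the chair is chosen; once a person stands beside it, the person is chosen instead; and if a second person starts talking, the focus moves to the speaker.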

Aiko's Technology

With current technology, it is impossible to design software and hardware that mimic every system of a living being. However, I have tried my best to design an artificial intelligence system that links dynamic software and hardware together to mimic part of human behaviour. By combining the Bio Robot Artificial Intelligence Neural System (aka B.R.A.I.N.S) software with a custom-designed humanoid (aka Aiko), we hope one day to build an android that is as close to a human as possible.

The difference between robots and humans is that we have feelings and emotions and robots do not, but we can start by building a robot that looks human, mimics human behavior, and interacts with its surroundings.

Aiko can talk and interact with humans (13,000+ sentences). She can read books and newspapers (printed font size of at least 12 pt), and she can solve math problems displayed to her visually. Aiko can see color, and she can also recognize simple foods such as hot dogs, hamburgers, and sandwiches, and even toys. Aiko can recognize the faces of family members, and she can be programmed to activate a defense mode when she does not recognize a person’s face in the house, such as in the case of an intruder. When you are about to go outside, Aiko can tell you to bring an umbrella if it is going to rain, or to wear warmer clothes if it is windy.
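Once face recognition and a weather forecast are available, the defense-mode and weather behavior described above reduce to simple rules. The sketch below assumes both inputs come from other parts of the system; the function names and the 30 km/h "windy" threshold are hypothetical choices for illustration, not values taken from BRAINS.

```python
from typing import AbstractSet, List, Optional


def should_activate_defense(face_detected: bool,
                            matched_name: Optional[str],
                            family: AbstractSet[str]) -> bool:
    """True when a face is visible but does not belong to a known family member."""
    # matched_name is whatever identity the (assumed) face recognizer returned,
    # or None if the face did not match anyone it knows.
    return face_detected and (matched_name is None or matched_name not in family)


def outdoor_advice(rain_expected: bool,
                   wind_speed_kmh: float,
                   windy_threshold_kmh: float = 30.0) -> List[str]:
    """Build reminders for someone about to go outside, from an (assumed) forecast."""
    tips: List[str] = []
    if rain_expected:
        tips.append("Bring an umbrella.")
    # The 30 km/h cut-off for "windy" is an illustrative assumption.
    if wind_speed_kmh >= windy_threshold_kmh:
        tips.append("Wear warmer clothes.")
    return tips
```

Keeping the decision rules separate from recognition means the same rules work no matter which face recognizer or weather source feeds them.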

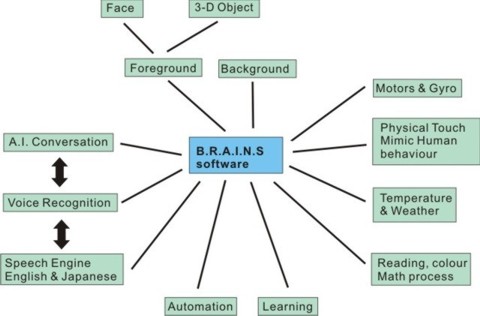

Software B.R.A.I.N.S

The software is programmed in C, C# and Basic, and is constantly updated.

The BRAINS software controls speech, reading, math, vision, color, hearing, automation, and sensors. Its functions range from reading temperatures to face and 3-D object recognition. In other words, it is literally the brain and heart of Aiko. In addition to controlling Aiko’s functions, the software can control robot kits such as the Kondo KHR2 through voice activation, automation, and basic recognition. The BRAINS software is designed to interact with the surrounding environment, process that information, and record it in its internal memory. Once the internal memory reaches full capacity, the information can be transferred to the server database, where it can be shared with current and future robots. There are a few things that, no matter what I do, the software cannot give Aiko: an emotion or a soul. The BRAINS software is not even close to human AI, but as with any journey, it goes one step at a time.
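The record-then-transfer cycle described above resembles a bounded buffer that is flushed when it fills up. The sketch below is a hypothetical illustration of that idea only: `InternalMemory`, a capacity counted in records, and the `upload` callback standing in for the server database are all assumptions, since the document does not describe how BRAINS actually stores or transfers data.

```python
from typing import Any, Callable, Dict, List

Record = Dict[str, Any]


class InternalMemory:
    """Hypothetical bounded local store that offloads to a server when full."""

    def __init__(self, capacity: int, upload: Callable[[List[Record]], None]) -> None:
        if capacity <= 0:
            raise ValueError("capacity must be positive")
        self.capacity = capacity
        self.upload = upload  # stands in for the transfer to the server database
        self.records: List[Record] = []

    def record(self, observation: Record) -> None:
        """Store one observation, flushing everything to the server once full."""
        self.records.append(observation)
        if len(self.records) >= self.capacity:
            # Memory is at full capacity: hand the batch to the server, then
            # start a fresh list so the uploaded batch is never mutated afterwards.
            self.upload(self.records)
            self.records = []
```

Passing the upload step in as a callback keeps the sketch independent of any particular database or network protocol.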

The Bio Robot Artificial Intelligence Neural System (BRAINS) is copyrighted by Trung Le under the Canadian Intellectual Property Office.

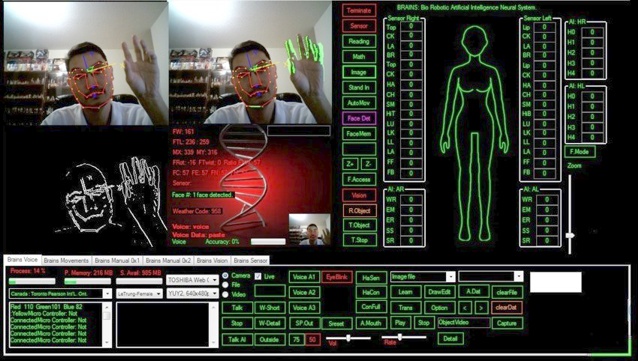

The main vision system (top left) recognizes the person’s face and facial expressions. It also identifies 3-D objects. The human motion tracing system (top right) highlights any movement in light green: there is a light green area around my right hand as I wave it and around the right side of my body, which moves with my hand. The third system (bottom left) detects the point of interest. Notice that the AI ignores the chair and anything else in the background and focuses on the most important “object” in the room, in this case me, moving my hand.
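One common way to produce a motion overlay like the light green areas in the top-right panel is frame differencing: compare two consecutive grayscale frames and mark the pixels whose brightness changed by more than a threshold. The document does not say which technique BRAINS uses, so this is a swapped-in, minimal sketch; the frame representation, the threshold of 25, and the function names are assumptions.

```python
from typing import List, Optional, Tuple

Frame = List[List[int]]   # grayscale intensities 0-255, indexed [row][column]
Mask = List[List[bool]]


def motion_mask(prev: Frame, curr: Frame, threshold: int = 25) -> Mask:
    """Mark pixels whose brightness changed by more than `threshold` between frames."""
    if len(prev) != len(curr) or any(len(a) != len(b) for a, b in zip(prev, curr)):
        raise ValueError("frames must have the same dimensions")
    return [[abs(c - p) > threshold for p, c in zip(prow, crow)]
            for prow, crow in zip(prev, curr)]


def motion_box(mask: Mask) -> Optional[Tuple[int, int, int, int]]:
    """Bounding box (top, left, bottom, right) around all moving pixels, or None."""
    rows = [r for r, row in enumerate(mask) if any(row)]
    if not rows:
        return None
    cols = [c for row in mask for c, moving in enumerate(row) if moving]
    return (min(rows), min(cols), max(rows), max(cols))
```

In a display like the one described, the `True` cells of the mask are the pixels that would be painted light green, and the bounding box gives a rough region to treat as the point of interest.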

A true AI should encompass all human traits, not just conversation.

The current BRAINS software is nowhere close to the human AI that I envision, but I am determined to reach that goal someday.

As of today, the only robots to use the BRAINS software are:

BRAINS Full Version:

- Aiko

BRAINS Lite Version (worked with Kumotek to design key elements for interactive robots):

- Intel Robot Arti (Intel Arti - Kumotek)
- Atom (Kumotek Interactive Robot for Nano TX 2008)

Specifications:

- Can control cameras with IEEE 1394 and/or USB interfaces
- Can control up to 32 sensors*, including gyros and accelerometers
- Controls microcontrollers and servo motors
- Controls automation
- Controls speech and reading recognition
- Controls math and color recognition
- Controls motion recognition
- Controls face recognition and 3-D object recognition
- Controls AI conversation
- Controls interaction with the surroundings, including physical touch and the weather

Hardware

Height: 152 cm, bust: 82 cm, waist: 57 cm, hip: 84 cm

Aiko V2 will have improved motors, sensors, silicone, and microcontrollers.

- Microprocessor: V1: LS372 and C7 board; V2: Intel i7
- Microcontrollers: 8*
- Gyros and accelerometers: 8*
- Sensors: 24*
- 120 GB SSD with 8 GB RAM
- Central data: 1000-1500 GB
- Power source: LiPo, 7.2 V and 11.1 V
- Motors: 72*
- Motor speed: max 0.20 s per 90 degrees
- Cameras: 2, 1x1CCD (1x3CCD)
- Controlled by an internal 64-bit OS

*Subject to change
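Reading the motor speed specification as the time needed to sweep 90 degrees, the corresponding maximum angular speed works out to:

$$
\omega_{\max} = \frac{90^\circ}{0.20\ \text{s}} = 450^\circ/\text{s} = 2.5\pi\ \text{rad/s} \approx 7.85\ \text{rad/s}
$$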
